Pioneering Accessibility: Early Computing, Screen Readers, and New Tech

Brought together at U-M in the early 1960s, Rackham alumni Jim Thatcher and Jesse Wright went on to create IBM's first screen readers for blind and low-vision computer users. More than six decades later, a Rackham alum and his faculty mentors are preparing the next leap in accessible computing.

Interdisciplinary Beginnings

Back in the 1950s, when computers were anything but personal, filling entire rooms and operated by trained professionals using paper punch cards, U-M was a leader in computer science and technology. Yet there was no "computer science" degree as we know it today. Instead, researchers and scholars interested in computing collaborated under the umbrella discipline of the "communication sciences," housed in the College of Literature, Science, and the Arts (LSA).

Within LSA, researchers came together to form Michigan's Logic of Computers Group, founded in 1956 by Arthur Burks, a philosopher-turned-computer scientist. As in many research collaborations of the time, scholars in the group were essentially expected to cover their own salaries through grants and government contracts. These were plentiful during the Cold War, when the nation's strength was measured in part by its research output. While government contract work was often a focus for members of the group, Burks's larger intellectual interests stemmed from his belief that computing was not merely machine-building but the study of logical systems, both natural and artificial, and that an interdisciplinary approach was vital.

Michigan alumnus Sam Franz (A.B. ’20), a historian of early computing and a current doctoral student at the University of Pennsylvania, describes Burks’s approach as a common one. “Early computing across the United States was a soup of different disciplines,” he says. “At Michigan, the thesis was that computers could solve problems in other fields, so it was best to collaborate with scholars in other fields, including medicine, biology, and psychology.”

According to Franz, this philosophy of deep collaboration likely laid the foundation for a fruitful partnership between two Rackham alumni and former Logic of Computers Group members: Jim Thatcher (Ph.D. '63) and his professor-turned-colleague Jesse Wright (M.A. '45, Ph.D. '51). After leaving U-M in 1963, both Thatcher and Wright pursued careers at IBM, where they are credited with creating the company's first screen readers, technology that helps blind and low-vision people use computers. Wright, who was blind, brought critical perspectives, skills, and experience to that work.

“Computing in the 1950s, when Thatcher and Wright were at U-M, was very much about understanding human-computer interaction,” Franz says. “There was no mouse, no keyboard, and no computer monitor as we understand them today. So, working with a mathematician who was blind would mean that the problem of man-machine communication would take different forms as these technologies developed.”

Collaborators Connect

Wright, a mathematician and professor, researched logical design, programming languages, and abstract machines and the computational problems they can solve.

Wright was also one of the few blind researchers on the U-M campus. In a letter held in the Bentley Historical Library, Burks described how challenging it was for Wright, as a blind scholar, to complete his work independently at Michigan. That lived experience gave Wright a deep well of expertise to draw on when he later co-created accessible technologies at IBM.

Thatcher joined the Logic of Computers Group as a mathematician and research assistant in 1962, working under Burks and Wright. His research was dense, mathematical, and abstract.

Franz notes that this work was "placing computing on a mathematical footing," essentially building mathematical models of how computer logic works.

“In some ways, you can think of the history of computing as marrying the logical design of computing machines and the mathematical problem of computability with physical hardware, with electronic circuits,” Franz says. “The Logic of Computers Group was primarily interested in the former, in the logical design. They cared less about the messiness of computer circuits.”

In the early days of computing, designing computer logic led researchers to address incredibly broad questions: Are computers giant electronic brains, similar to people’s brains? Can they self-reproduce? Can they think?

John von Neumann, a Hungarian-American mathematician and physicist and a colleague of Burks, took up these questions mathematically, finding that a class of logical machines known as "automata" may be able to self-reproduce and adapt to stimuli. Archival correspondence shows that Thatcher worked on von Neumann's model during his time at U-M. Although abstract, von Neumann's work can be seen today as an early attempt to create an "intelligent" machine.

Screen Readers for All

Once at IBM, Thatcher and Wright confronted a new kind of puzzle: how to let blind and low-vision users interact with computers in a fundamentally visual world. The first screen readers were simple by today's standards, mirroring the computer interfaces of the time: the reader would vocalize whatever was at the cursor, line by line, character by character. As interfaces evolved to include more navigation options and graphics, Thatcher and Wright recognized that exposing a program's underlying information hierarchy would serve blind users best. A line-by-line recitation of what appeared on screen was no longer enough.

Thatcher and Wright's screen readers could present a virtual document or interface as a logical hierarchy. Users could move between levels of detail, reading a character, a line, or a page, or switch between applications.

U-M professor and accessible tech researcher Sile O’Modhrain observes that early screen readers required deep collaboration with application developers.

“The manufacturers of screen readers need to be in close relationship with the developers, as the info in the applications needs to be interpreted by the screen reader,” O’Modhrain says, noting that early models laid the groundwork for the more flexible, multimodal screen readers of today.

Accessibility’s Next Chapter

Sixty-three years later, a collaboration born at U-M between two faculty members and a Rackham alumnus is advancing screen reader technology yet again. NewHaptics, an Ann Arbor tech startup, grew out of years of cross-disciplinary collaboration at U-M. The work was supported first by a U-M M-Cubed grant and later by Innovation Partnerships, the university's hub for research commercialization. The term "haptic" refers to technology that allows users to interact with computers through their sense of touch.

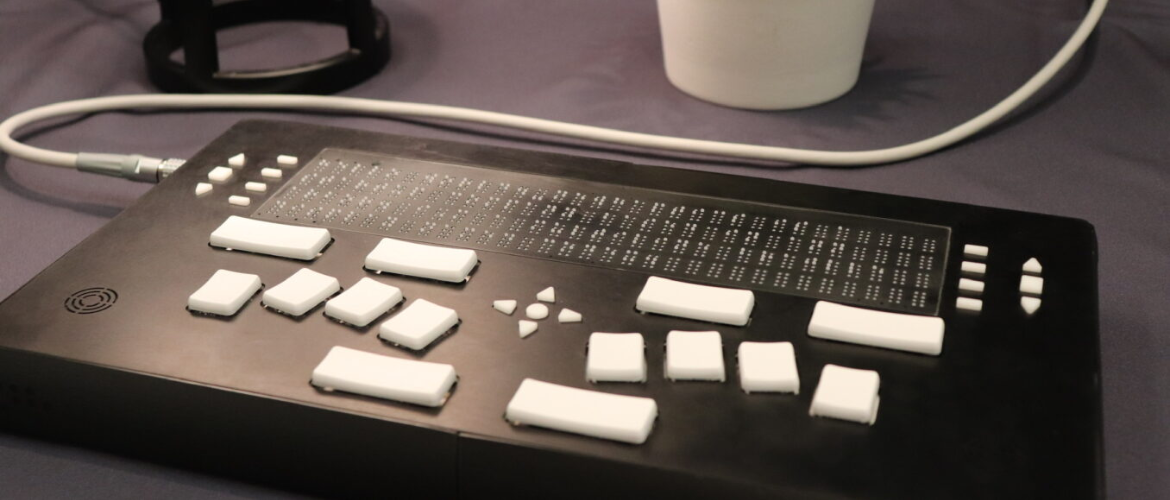

The company is poised to release Codex (pictured at the top of this story), a braille display that can selectively raise and lower multiple lines of braille dots, displaying tactile graphics as the user's hands skim the surface of the device. Because it goes beyond a simple line-by-line transcription of text into braille, this approach is especially useful for large, complex visual content such as maps and graphs.

The project began in 2012 with Alex Russomanno (Ph.D. '17), then a Rackham doctoral student in mechanical engineering. He joined forces with professors O'Modhrain and Brent Gillespie, both experts in tactile, haptic, and interface design.

Russomanno reports that Codex lets users view and navigate digital text and interfaces, including indents, headings, and the relationships between content blocks, just as sighted readers do. "We have future product plans for a large-area tactile display that will support more in the graphics realm," Russomanno says.

Like Wright, O'Modhrain brings deep end-user insight to the project as one of the world's few blind researchers in haptic interfaces and braille technology. She also sees herself as part of a legacy of tech pioneers with vision impairments.

“Computer programming and coding was a great job for blind people in the early days of computing. Blind programmers were really sought after because they had such good memories for spatial work,” she says.

Behind the scenes, Codex is designed to work directly with modern screen readers such as Freedom Scientific's JAWS and Apple's VoiceOver, building on decades of existing screen reader development. NewHaptics is building the tactile "display monitor" that gives blind users access to digital information at scale.

“It’s not just about reading text. It’s about accessing non-textual information—the maps, the graphics, the layout cues that give context and meaning,” O’Modhrain says.

According to Russomanno, Codex aims to address long-standing accessibility needs. “In many ways, this product is about literacy and providing quality access to information. Blind users have had a narrow digital window for decades.”

Rackham Graduate School